AI Health

Friday Roundup

The AI Health Friday Roundup highlights the week’s news and publications related to artificial intelligence, data science, public health, and clinical research.

June 2, 2023

In this week’s Duke AI Health Roundup: does explainable AI help with decision-making?; “skeletal editing” opens new doors in chemistry; watching out for persuasive language in science; Charles Babbage and the use of data for control; hospital CT scanner illuminates hidden manuscripts; ChatGPT exceeds brief, writes fiction in court filing; many older patients use health portals; conference eyes growing threats from misinformation; much more:

AI, STATISTICS & DATA SCIENCE

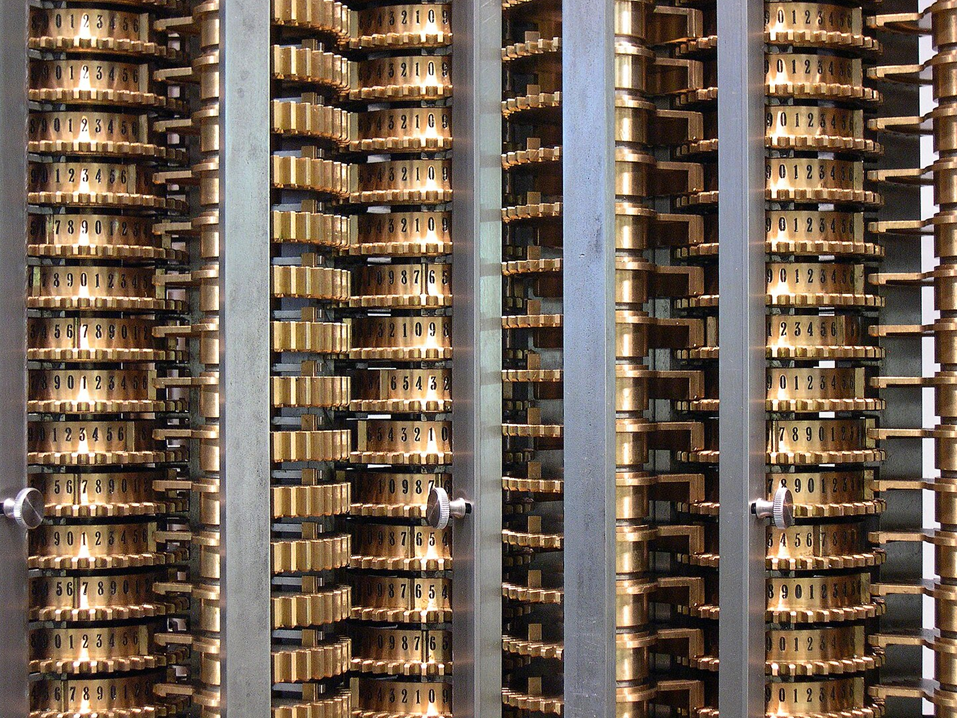

- “Worker surveillance and control was also a central feature of Babbage’s theories. A chapter in Babbage’s treatise advises readers on what ‘data’ those wishing to understand and manage factory operations should gather. There are clear parallels between Babbage’s data desires and the data and metrics that plantation owners, managers, and overseers shaped plantation labor practices to collect.” An essay in Logic(s) Magazine by AI expert Meredith Whittaker traces the skein of connections linking slavery, colonialism and plantation economics with the origins of modern industry – and early computing technologies as first realized by Charles Babbage.

- FREE STATISTICS! -> “I developed the lecture notes based on my ‘Causal Inference’ course at the University of California Berkeley over the past seven years. Since half of the students were undergraduates, my lecture notes only require basic knowledge of probability theory, statistical inference, and linear and logistic regressions.” Berkeley statistician Peng Ding has made a written version of his introductory course in causal inference available for download from arXiv.

- “Even though Tessa was built with guardrails, according to its creator Dr. Ellen Fitzsimmons-Craft of Washington University’s medical school, the promotion of disordered eating reveals the risks of automating human roles.” Vice’s Chloe Xiang reports on an eating disorder hotline’s ‘Tessa’ chatbot, which began offering dubious advice to callers shortly after it replaced human staffers.

- “We argue explanations are only useful to the extent that they allow a human decision maker to verify the correctness of an AI’s prediction, in contrast to other desiderata, e.g., interpretability or spelling out the AI’s reasoning process. Prior studies find in many decision making contexts AI explanations do not facilitate such verification. Moreover, most contexts fundamentally do not allow verification, regardless of explanation method.” A preprint article by Raymond Fok, available from arXiv, explores whether explainable AI provides advantages when assisting humans in decision-making.

- “Judge Castel said in an order that he had been presented with ‘an unprecedented circumstance,’ a legal submission replete with ‘bogus judicial decisions, with bogus quotes and bogus internal citations.’ He ordered a hearing for June 8 to discuss potential sanctions.” The New York Times’ Benjamin Weiser reports on fallout from the decision by an intrepid lawyer to use ChatGPT to conduct unsupervised legal research.

- “Many AI governance initiatives focus on the risks inherent to a particular deployment context, such as the “high-risk” applications listed in the draft EU AI Act. However, models with sufficiently dangerous capabilities could pose risks even in seemingly low-risk domains. We therefore need tools for assessing both the risk level of a particular domain and the potentially risky properties of particular models; this paper focuses on the latter.” A much-discussed paper by Shevlane and colleagues sketches out approaches for evaluating AI models for “extreme risks.” A Twitter thread by Connected By Data’s Jeni Tennison provides a critique of the underlying arguments, and some interesting points about anthropomorphizing AIs.

BASIC SCIENCE, CLINICAL RESEARCH & PUBLIC HEALTH

- “To get a sense of the challenge, consider that the small, carbon-based molecules that make up most of the world’s drugs typically contain fewer than 100 atoms, and are assembled piece by piece in a series of chemical reactions. Some connect large sections of the molecule’s skeleton; others decorate that skeleton with clusters of atoms to create the final product. But few methods can reliably tweak a molecule’s core skeleton once it has been assembled.” Nature’s Mark Peplow reports on the rise of “skeletal editing” in chemistry, in which single atoms can be swapped in and out of a molecule’s structure.

- “In recent decades, researchers have begun peering beneath book covers using noninvasive techniques to find medieval binding fragments and read what’s written on them. But many of those techniques have limitations, which prompted Dr. Ensley and his colleagues to try CT scanning, the same kind available in a hospital. The technique’s three-dimensional view solves the focus problems that plagued other methods, and a scan can be completed in seconds rather than the hours previously required.” The New York Times’ Katherine Kornei reports on researchers who are using hospital computed tomography (CT) scanners to identify fragments of medieval manuscripts that were repurposed as binding materials at the dawn of the print age in Europe.

- “Research in safety-net and community clinics can also help raise participation in trials by underrepresented groups by increasing the geographic diversity of trial sites. The majority of trials currently take place in academic medical centers, which are mostly located in cities. Yet 70% of Americans live two hours or more from an academic medical center. As a result, many people, particularly those who are under-resourced, cannot easily participate in clinical research unless it is conducted entirely by telephone or on the web.” An opinion article by Gloria Coronado and Leslie Bienen, appearing in STAT News (log-in required), examines the role that “safety net” clinics could play in expanding access to clinical trials for under-represented groups.

- “Overall, 78% of people age 50 to 80 have used at least one patient portal, up from 51% in a poll taken five years ago, according to findings from the University of Michigan National Poll on Healthy Aging. Of those with portal access, 55% had used it in the past month, and 49% have accounts on more than one portal.” A study conducted by the University of Michigan finds that many older patients are comfortable using web portals to access medical information, but there are some notable exceptions.

COMMUNICATION, HEALTH EQUITY & POLICY

- “Hospitals stepping in and providing child care makes sense for their employees, and it’s particularly commendable when health systems like Ballad Health open up their child care centers to the larger community, much of which is located in what is considered a ‘child care desert.’…But hospitals shouldn’t have to do this, and it’s not a solution we can expect to scale to other industries — or even other health centers.” A STAT News viewpoint article by Rebecca Gale examines the practice of hospitals providing childcare for their workers – and explains why it may be a stopgap measure at best.

- “…when writing a scientific article, authors must use these ‘persuasive communication devices’ carefully. In particular, they must be explicit about the limitations of their work, avoid obfuscation, and resist the temptation to oversell their results.” A paper published in eLife by Corneille and colleagues describes the dangers of relying on persuasive writing in scientific articles, to the point that it may amount to a thumb on the scales.

- “Speakers described how the attention economy’s business model intersects with the very human desire to belong — fueling an information environment riddled with misinformation and disinformation….Ceylan found the rewards — attracting attention and recognition in the form of likes and comments — of sharing online create habits that drive people’s behavior more than their motivations do.” At Axios, Alison Snyder provides a rundown of a recent National Academy of Sciences meeting that addressed growing concerns about the impact of misinformation on science and on society at large.

- “Fox says the study shows that reviewers are not prejudiced against researchers from low-income or non-English-speaking countries. Rather, authors from rich nations get a boost when reviewers know their identity. He suggests that researchers from wealthy nations benefit from prestige bias — whereby reviewers expect work from researchers from certain institutions or countries to be of high quality and give deference to them.” Nature’s Natasha Gilbert examines the evidence that anonymized peer review results in a more equitable review process.