AI Health

Friday Roundup

The AI Health Friday Roundup highlights the week’s news and publications related to artificial intelligence, data science, public health, and clinical research.

May 12, 2023

In this week’s Duke AI Health Friday Roundup: what the medical world still doesn’t understand about AI; meeting the needs of small vulnerable newborns; embracing failure in science; Google goes big on generative AI; machine learning for hunting shipwrecks in Thunder Bay; regression vs ML for breast cancer prognostication; data availability statements often disappoint; EU committees signal move toward stronger data privacy; much more:

AI, STATISTICS & DATA SCIENCE

- “‘One of the things that I find extremely jarring is the extreme zoom laser focus on ChatGPT, per se,’ said Saria, who is also the director of the Machine Learning and Healthcare Lab at Johns Hopkins University. ‘People are thinking of ChatGPT as like a box. The progress in the field has not been this box,’ she said.” In an article for STAT News, Brittany Trang summarizes comments from a conversation with health AI experts who warn that the field of medicine is laboring under some misapprehensions about recent developments in AI.

- “A few executives I spoke to mentioned a tension in AI between “factual” and “fluid.” You can build a system that is factual, which is to say it offers you lots of good and grounded information. Or you can build a system that is fluid, feeling totally seamless and human. Maybe someday you’ll be able to have both. But right now, the two are at odds, and Google is trying hard to lean in the direction of factual. The way the company sees it, it’s better to be right than interesting.” The Verge’s David Pierce reports on Google’s unveiling of its new approach to search that incorporates generative AI. And in other Google news, STAT’s Casey Ross reports on Google’s move to incorporate large language model AI into medical imaging analysis.

- “Although the regression approaches yielded models that discriminated well and were associated with favourable net benefit overall, the machine learning approaches yielded models that performed less uniformly. For example, the XGBoost and neural network models were associated with negative net benefit at some thresholds in stage I tumours, were miscalibrated in stage III and IV tumours, and exhibited complex miscalibration across the spectrum of predicted risks.” In a research article recently published in BMJ, Clift and colleagues present findings from a database cohort study that compared regression methods with machine learning approaches for estimating 10-year risk of breast cancer-related mortality.

- “We tested one compound, BRD-K56819078, in aged mice and found that it significantly decreased senescent cell burden and mRNA expression of senescence-associated genes in the kidneys. Our findings underscore the promise of leveraging deep learning to discover senotherapeutics.” A research article by Wong and colleagues published in Nature Aging describes the use of a deep neural net machine learning approach to identifying candidate “senolytics” – compounds that can affect cellular aging processes (H/T @AI_4_Healthcare).

- “Many journals now require data sharing and require articles to include a Data Availability Statement. However, several studies over the past two decades have shown that promissory notes about data sharing are rarely abided by, and that data is generally not available upon request. This has negative consequences for many essential aspects of scientific knowledge production, including independent verification of results, efficient secondary use of data, and knowledge synthesis.” In a preprint available from PsyArXiv, Ian Hussey takes stock of data availability statements in the scientific literature, and whether they measure up to their promises.

BASIC SCIENCE, CLINICAL RESEARCH & PUBLIC HEALTH

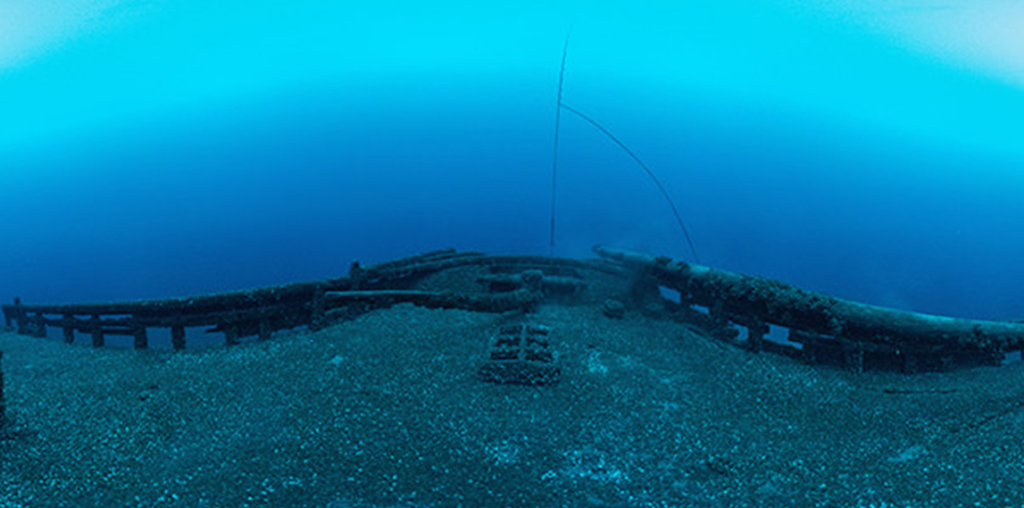

- “In the broadest sense, training a machine learning system to search for shipwrecks is similar to training an autonomous car to safely drive on the streets—makers train both models by showing them images of things they’re likely to encounter out in the real world…The particulars of training an underwater search vehicle, however, are much more complicated. That’s chiefly because there just isn’t a lot of underwater imagery out there, particularly of unusual features like shipwrecks.” A news article by Gabe Cherry (with photos by Marcin Szczepanski) at the University of Michigan Engineering News website describes an ambitious project to train autonomous underwater robots to spot shipwrecks and other submerged features in the Lake Huron Thunder Bay National Marine Sanctuary.

- “Conner’s story offers an early glimpse of how these drugs, often so transformative at first, can fade as the years wear on. And it underscores the need to develop new technologies and strategies that can allow doctors to administer these treatments multiple times over the course of patients’ lives, topping their muscles off with new genes if and when they run low.” In a feature article for STAT News, Jason Mast explores the early promise and frustrating complexities of treating Duchenne muscular dystrophy with gene therapies.

- “She and others say that despite growing awareness of autism and acceptance of neurodiversity in many parts of society, the academic and clinical research community still generally considers autism a disease, a disorder, a problem to be solved. The focus of research and therapies, they say, is often on suppressing autistic traits and adopting neurotypical behaviours, rather than developing services and programmes to support autistic people.” A Nature news feature by Emiliano Rodríguez Mega explores the discontent that some members of the autistic community are expressing about research priorities in autism studies – and sometimes with the basic framing of the issues at stake.

- “The foundations of human wellbeing are laid before birth. Unfortunately, many babies experience adversities during this intrauterine period. Consequently, they can be born preterm or suffer fetal growth restriction and be born small for gestational age (SGA). Both preterm birth and fetal growth restriction can result in low birthweight (LBW). Children who are born preterm, SGA, or with LBW have a markedly increased risk of stillbirth, neonatal death, and later childhood mortality….Prevention of preterm and SGA births is critical for global child health and for societal development.” A special report from the Lancet addresses different challenges to infant health, included under the overarching umbrella category of “small vulnerable newborns.”

COMMUNICATION, HEALTH EQUITY & POLICY

- “Should we talk more openly with students about failure? When I quietly left research, frustrated at what felt like my lack of accomplishment, was this a typical experience? How often do we inadvertently discourage students from persisting in science, simply by omitting honest descriptions of the failure inherent to the research process? Research is messy and full of failed attempts. Trying to protect students from that reality does them a disservice.” An essay in Science by Jennifer Lanni explores the long-term benefits of allowing graduate students to wrestle with failure – and to understand it as a natural part of the scientific process.

- “Scientific conferences can inspire researchers to take new directions in their work and help them to meet new employers and collaborators. The opportunities on offer are not, however, equally open to all. For scientists who are members of under-represented groups, conferences can be unwelcoming environments, where they are repeatedly interrupted, face hostile questioning, receive comments on their appearance, are excluded from leadership roles and worse because of their gender, skin colour or other aspects of their identity.” A career feature article in Nature includes a discussion with underrepresented minority researchers who describe their experiences at scientific conferences – and the pressures that come with being the only visible member of a minority in the room.

- “Our investigation found that the location and format of usage rights language differed across platforms and between full-text article formats on the same platforms. We also found that usage rights are not shown in search results or offered as limiters or filters and there is inconsistent use of CC badges/icons. HTML layouts differed the most across platforms, making it particularly challenging to find usage rights information.” In a post for Scholarly Kitchen, Lisa Janicke Hinchliffe and Annika Deutsch present results from a study that evaluated how easy (or difficult) it was to locate clear information about permissions regarding reproduction, sharing, and adaptation of scholarly publications released under different kinds of open access (OA) licenses.

- “The European Parliament’s lead committees send a clear signal that certain uses of AI are simply too harmful to be allowed, including predictive policing systems, many emotion recognition and biometric categorisation systems, and biometric identification in public spaces.” A news item posted at the European Digital Rights (EDRi) website reports on deliberations by a pair of European Parliament committees that signal a move toward stronger privacy protections that would limit the use of certain kinds of AI systems, including “predictive policing” algorithms and facial and biometric identification.