AI Health

Friday Roundup

The AI Health Friday Roundup highlights the week’s news and publications related to artificial intelligence, data science, public health, and clinical research.

May 13, 2022

In today’s Duke AI Health Friday Roundup: automatic bias detection; integrating AI into clinical workflows; the pitfalls of ancestry data; going beyond fairness in AI ethics; figuring out the “why” of some cancers; why preprints are good for patients, too; transparency and reform for medical debt; US public still esteems scientists; urging social media to open its book for researchers; imaging the invisible at cosmic scales; much more:

Deep Breaths

- “Leonardo’s rule says that the thickness of a limb before it branches into smaller ones is the same as the combined thickness of the limbs sprouting from it. But according to Grigoriev and his colleagues, it’s the surface area that stays the same….Using surface area as a guide, the new rule incorporates limb widths and lengths, and predicts that long branches end up being thinner than short ones.” In an article for Science News, James Riordan reports on a recent update to “Leonardo’s (da Vinci) rule,” a not-quite-right formula for that describes how trees grow and branch.

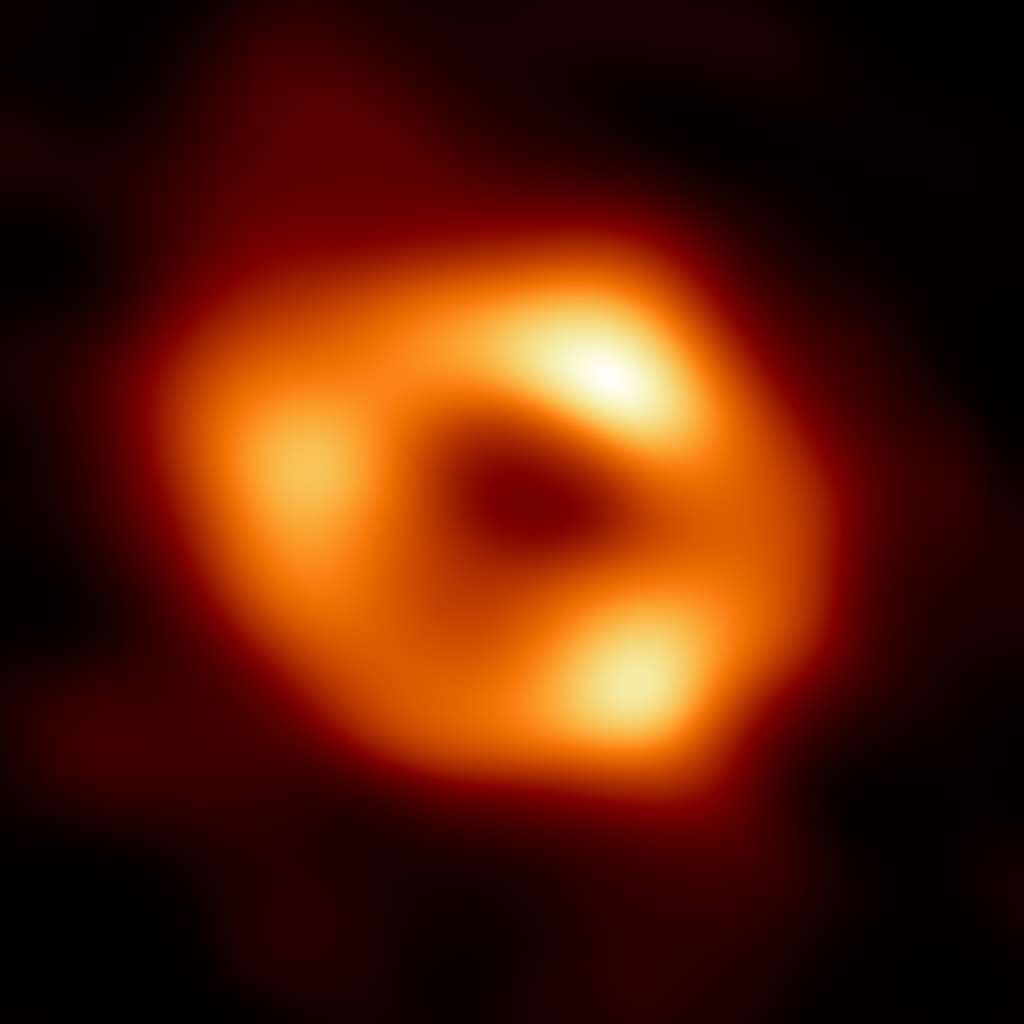

- A team of scientists working with the Event Horizon Telescope project has released the first image of the supermassive black hole at the center of our galaxy. Although Sagittarius A* is approximately 4 million solar masses, it is however something of a peewee compared with the first black hole imaged by the Event Horizon Telescope, the 6.5 billion solar mass Messier 87*. Despite the large differential in mass, the images of the two galactic black holes appear remarkably similar. Quanta’s Jonathan O’Callaghan has the story.

AI, STATISTICS & DATA SCIENCE

- “AI progress can stall when end users resist adoption. Developers must think beyond a project’s business benefits and ensure that end users’ workflow concerns are addressed. An article published at the Sloan Review by authors from MIT’s Sloan School of Management and the Duke Institute for Health Innovation explores the need to properly socialize AI interventions with the people who will be using them before unleashing them in real-world settings.

- At his blog, Vanderbilt statistician Frank Harrell presents a detailed, step-by-step guide to creating reproducible research reports using the R tools Markdown and Quarto.

- “Classifiers are biased when trained on biased datasets. As a remedy, we propose Learning to Split (ls), an algorithm for automatic bias detection. Given a dataset with input-label pairs, ls learns to split this dataset so that predictors trained on the training split generalize poorly to the testing split. This performance gap provides a proxy for measuring the degree of bias in the learned features and can therefore be used to reduce biases.” A preprint by Bao and colleagues, available from arXiv, presents a method for automatic dataset bias detection.

- “There is a growing number of published studies on public attitudes towards data. However, in 2020 a comprehensive academic review of this research identified how the complex, context-dependent nature of public attitudes to data renders any single study unlikely to provide a conclusive picture. “ An evidence review published by the Ada Lovelace Institute examines UK public attitudes regarding data regulation.

- “When you think about AI ethics, the first thing that comes to mind is fairness and bias. And, yes, those are important, but that’s not the only thing. Fairness and bias doesn’t even apply in every possible scenario. Some of the work that I’ve done in the past was very much around predicting jet engine failure, or predicting how much power will a wind turbine generate…And those are scenarios where it’s not so much about fairness and bias, but it is more about, say, reliability — the robustness, the safety and security aspect of it.” An interview at Protocol with Trustworthy AI author Beena Ammanath broadens the conversation about ethical AI to include more than just discussions of potential bias.

BASIC SCIENCE, CLINICAL RESEARCH & PUBLIC HEALTH

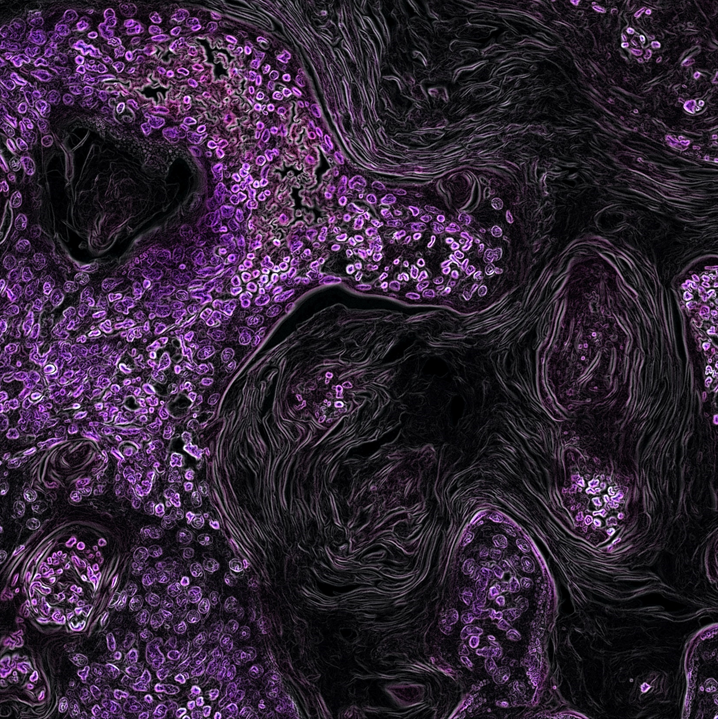

- “The distinction matters because of the implications for cancer prevention: If a cancer is mostly caused by toxic exposures, then public health efforts should focus on strategies to prevent those exposures. But if a cancer is mostly the result of random mutations, then little can be done to prevent it, and efforts might instead focus on early detection and treatment.” An article in Scientific American by Viviane Caller profiles recent modeling advances that may help scientists and physicians determine whether point mutations in different cancers are the result of random variation, or have an attributable cause, such as a environmental exposure to a carcinogen.

- “The danger in turning to genetic ancestry stems from the dominant way ancestry is currently used within genetics, as continental categories such as African ancestry, European ancestry, and the like. These categories are easy to conflate with racial categories….This confuses a sociopolitical concept with a biological one. This well-meaning ‘solution’ ends up perpetuating the same problem inherent in racial categories: that humans can be sorted on the basis of their biology into a small number of types.” An article in STAT News by Anna C.F. Lewis explains why attempting to substitute genetic ancestry for racial categories in biomedical research may simply end up perpetuating problems of bias and mistaken inference.

- “The researchers found substantial differences in the number of days for which the participants shed virus capable of infection: one participant excreted viable virus from their nose for nine days, whereas nine participants showed no detectable levels of infectious virus throughout the testing period. Modelling estimated that the most-infectious people shed more than 57 times more virus over the course of infection than did the least-infectious individuals.” An article in Nature highlights research exploring the question of why some people with COVID seem to shed much more of the virus than others, making them potentially more infectious.

- “Inclusion of underserved populations in PRO data collection will help promote equitable healthcare and reduce health data poverty. Co-design of systems with representative patient input will be central to their successful realization. Resource implications must be considered, and novel approaches evaluated, to promote shared learning and best practice.” A commentary published in Nature Medicine by Calvert and colleagues makes the case for ensuring that patient-reported health outcomes are inclusive and equitable, and lays out steps for making that happen.

COMMUNICATIONS & DIGITAL SOCIETY

- “In a laboratory study and a field experiment across five countries (in Europe, the Middle East and South Asia), we show that videoconferencing inhibits the production of creative ideas. By contrast, when it comes to selecting which idea to pursue, we find no evidence that videoconferencing groups are less effective (and preliminary evidence that they may be more effective) than in-person groups.” A study published in Nature by Brucks and Levav investigated the effects that the wave of pandemic-related videoconferencing has had on the quality of collaboration, compared with in-person meetings.

- “…the preprint system has come of age, demonstrating huge value in rapidly communicating important research findings….A degree of immediate peer review is also available by means of the preprint comments section and from colleagues via social media. The full peer-reviewed manuscripts usually appear many weeks or even months later. I cannot envisage a future without such rapid dissemination of new evidence.” A viewpoint article by Peter Horby published in Nature Medicine endorses the use of preprint articles to communicate research findings as, on balance, a good thing for patients.

- “Researchers have measured Americans’ understanding of S&T for decades and have noted a pattern of positive perceptions about science and scientists over time. This report describes that pattern and considers how those perceptions vary between people with different characteristics.” A National Science Foundation report authored Brian Southwell and Karen White provides a snapshot of US public attitudes about science and scientists as measured by survey data.

- “People of color are overrepresented in low-paying frontline jobs that increase their exposure, Furr-Holden notes; they also face unequal access to health care and have more underlying conditions that make them more vulnerable to begin with. All of these are ongoing factors that raise the risk of infection and death.” NPR’s Maria Godoy scrutinizes the toll of the COVID pandemic, and uncovers distinct patterns of inequality in the impact.

POLICY

- “…studies of medical debt litigation are limited by the difficulty of obtaining relevant data, which is necessary to guide urgently needed reform. To better understand medical debt litigation, uniform, searchable electronic dockets should be adopted by all state courts, and standardized reporting requirements should be instituted for all health care entities.” An article in Health Affairs Forefront by Shultz and colleagues offers a sense of urgency for reforming how hospitals and health systems go about collecting medical debt – including how they document and report debt collection activities.

- “The only way to understand what is happening on the platforms is for lawmakers and regulators to require social media companies to release data to independent researchers. In particular, we need access to data on the structures of social media, like platform features and algorithms, so we can better analyze how they shape the spread of information and affect user behavior.” A viewpoint article published in Scientific American by Renée DiResta, Laura Edelson, Brendan Nyhan, and Ethan Zuckerman asserts that it is high time that social media companies permitted independent researchers to have a look under the hood at how their apps and algorithms are actually operating.

- “Vague mandates won’t work, but with clear frameworks, we can weed out AI that perpetuates discrimination against the most vulnerable people, and focus on building AI that makes society better.” An opinion article in Nature by NYU sociologist Mona Sloane lays out a roadmap for developing AI systems that prioritize fairness.