AI Health

Friday Roundup

The AI Health Friday Roundup highlights the week’s news and publications related to artificial intelligence, data science, public health, and clinical research.

March 29, 2024

In this week’s Duke AI Health Friday Roundup: White House issues policies for AI use by federal agencies; NEJM AI requires registration of interventional AI studies; 3D specimen imaging project reaches finish line; insights into the human immune system, courtesy of COVID; using “digital twins” in biomedical research; hallucinated software gets called by real computer code; who should be responsible for policing integrity in scientific publication?; much more:

AI, STATISTICS & DATA SCIENCE

- “…any trial that starts enrolling patients after January 1, 2025 and that uses an AI intervention as part of its approach to answer a clinical question will need to be registered in a trial database that meets the WHO ICTRP specification or contributes data to this WHO platform if the final work is to be considered for publication in NEJM AI.” In the current issue of NEJM AI, the editors announce a stance on AI trial registration analogous to the one in force across most biomedical literature for manuscripts reporting on clinical trials.

- “…we can’t get to A.I.-powered healthcare without compelling prospective clinical trial evidence in the real world of medical care, with diverse participants. That’s what we’re missing now, and hopefully will start to see soon. I am and remain optimistic for A.I.’s transformation of life science and medicine in the years ahead. It’s still early.” At his Ground Truths Substack page, Eric Topol surveys some recent developments in biomedical AI.

- “We propose a novel, deep-learning, generative model of patient timelines within secondary care across mental and physical health, incorporating interoperable concepts such as disorders, procedures, substances, and findings. Foresight is a model with a system-wide approach that targets entire hospitals and encompasses all patients together with any biomedical concepts (eg, disorders) that can be found in both structured and unstructured parts of an EHR.” A research article by Kraljevic and colleagues, published in The Lancet Digital Health, describes a predictive model based on a generative transformer that draws on both structured EHR data and free-text chart notes.

- “One of the biggest challenges to precision medicine and the use of MDTs [medical digital twins] is the biological heterogeneity of patients. To account for this, we will probably need to develop higher-resolution models than the ones that are currently available, given our knowledge of human biology and the data to capture it. By analogy, the accuracy of numerical weather prediction models over longer forecasting windows provides a good paradigm.” A perspective article by Laubenbacher and colleagues explores the use of “digital twins” in biomedical research as part of a theme issue of Nature Computational Science devoted to the topic.

BASIC SCIENCE, CLINICAL RESEARCH & PUBLIC HEALTH

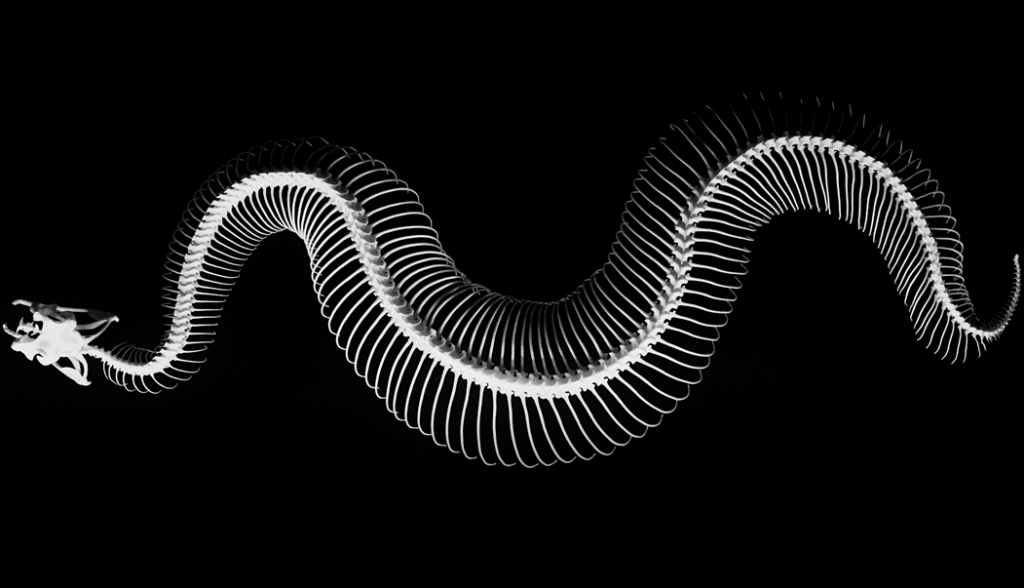

- “The physical specimens and objects in natural history museums require people to give them meaning. Access to natural history collections has increased over the past few hundred years, from their inception as cabinets of curiosity in aristocratic households to public museums exhibiting subsets of their collection to spark wonder and curiosity. Yet, traditionally, these specimens and objects were collected by specialists and for…” An article by Blackburn and colleagues in the journal BioScience reports on the completion of a project that produced an open-access repository of high-resolution digital scans of vertebrate species drawn from numerous natural history specimen collections.

- “This is hardly the first time a new pathogen has made its way from nature into humans. For instance, four coronaviruses normally described as ‘common cold coronaviruses’ at some point in the past made the jump from bats, mice, or some other species into people. But these events were either unobserved at the time or took place before scientists had the tools to identify what was happening, let alone chart in exquisite detail their impact on immune systems.” In an article for STAT News, Helen Branswell examines the ways that the COVID pandemic afforded a rare glimpse into the workings of the immune system.

- “The period that has defined the development of the artificial pancreas spans 60 years, but most achievements have occurred in the past 20 years. This pace of clinical progress has provided people with diabetes on intensive insulin treatment with a powerful tool to achieve a better metabolic control.” A commentary in Nature Medicine by Phillip and colleagues surveys the history and future directions of efforts to develop an artificial pancreas for patients with type 1 diabetes mellitus.

COMMUNICATION, HEALTH EQUITY & POLICY

- “…the White House Office of Management and Budget (OMB) is issuing OMB’s first government-wide policy to mitigate risks of artificial intelligence (AI) and harness its benefits – delivering on a core component of the President Biden’s landmark AI Executive Order. The Order directed sweeping action to strengthen AI safety and security, protect Americans’ privacy, advance equity and civil rights, stand up for consumers and workers, promote innovation and competition, advance American leadership around the world, and more.” This week, the White House announced a set of Office of Management and Budget policies that establish guidelines for the use of AI technologies by federal agencies.

- “Despite the relatively low number of incidents, not checking every accepted paper puts a journal at risk of missing something and winding up on the front pages of Retraction Watch or STAT News. This is not where we want to be and it opens you up to a firestorm of criticism — your peer review stinks, you don’t add any value, you are littering the scientific literature with garbage, you are taking too long to retract or correct, etc….The bottom line is that journals are not equipped with their volunteer editors and reviewers, and non-subject matter expert staff to police the world’s scientific enterprise.” In a post at Scholarly Kitchen, Angela Cochran argues that scholarly journals face an impossible task in trying to keep the products of scientific-integrity lapses out of the published literature, and that research institutions may need to shoulder more of the burden.

- “Several big businesses have published source code that incorporates a software package previously hallucinated by generative AI….someone, having spotted this reoccurring hallucination, had turned that made-up dependency into a real one, which was subsequently downloaded and installed thousands of times by developers as a result of the AI’s bad advice, we’ve learned. If the package was laced with actual malware, rather than being a benign test, the results could have been disastrous.” A story by The Register’s Thomas Claburn describes an experiment in which a software package “hallucinated” by generative AI was turned into a real package and then incorporated into real source code, showing how such made-up dependencies leave an open door for attackers to slip malicious packages into software.